How AI Learns

A couple weeks ago, we explored why AI sometimes hallucinates by making up facts with surprising confidence.

That sparked a great question from several of you: How exactly does AI learn in the first place?

At its core, training AI is about finding patterns in massive amounts of data. The AI doesn't actually "understand" anything the way we do. Instead, it creates mathematical representations of everything it sees. If I asked you to describe the difference between a cat and a dog, you might mention fur, ears, and behavior. An AI turns everything into numbers. Really, really specific numbers.

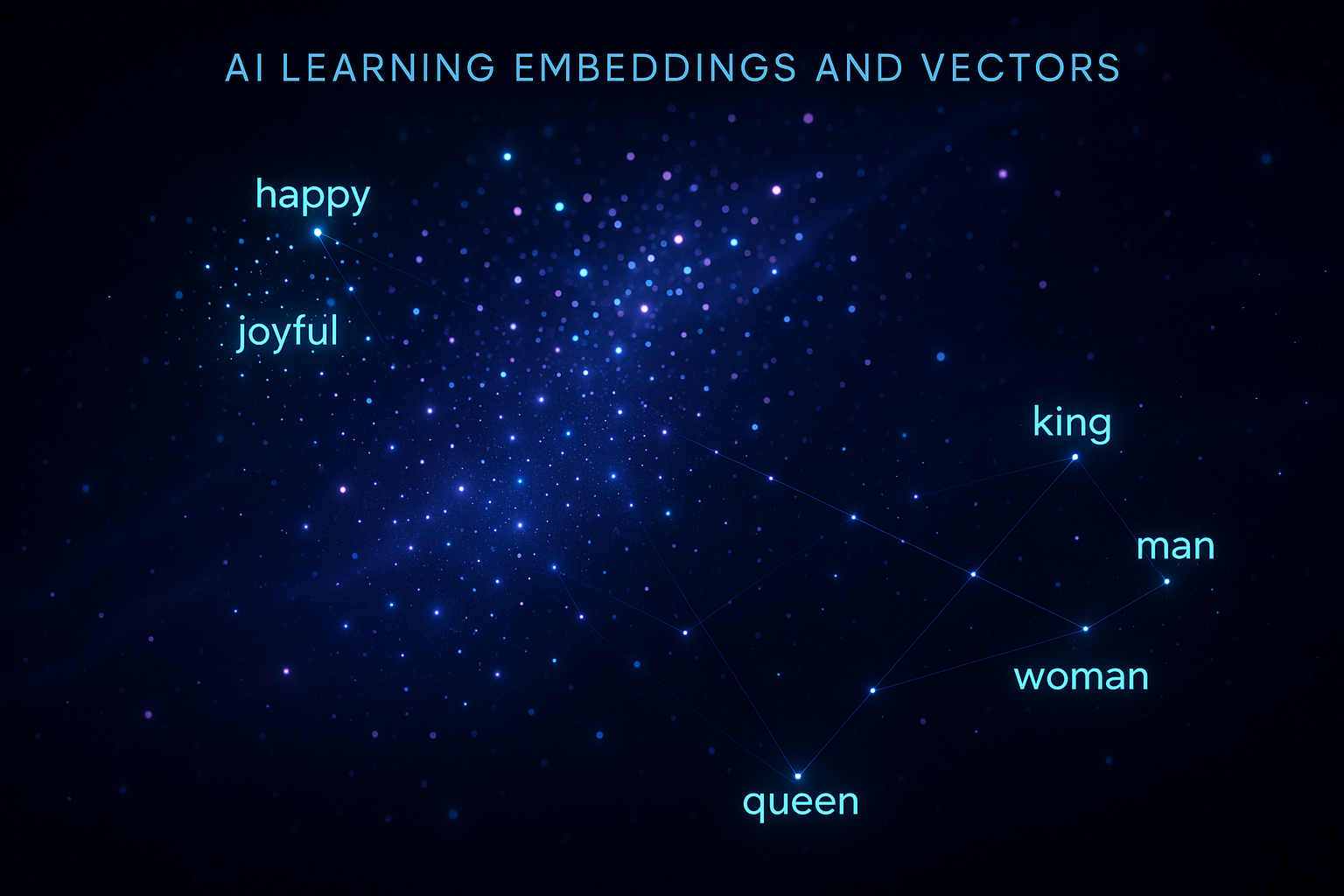

These numbers aren't random, though. They're called embeddings. An embedding is a way to represent words, sentences, or even images as lists of numbers that capture relationships and meaning. Imagine you're trying to organize every word in the English language on a giant map. Words that mean similar things would be close together. "Happy" might be near "joyful," while "car" would hang out near "vehicle" and "automobile." Now imagine this map has not just two dimensions, but hundreds or even thousands. That's essentially what embeddings do: they place concepts in a vast mathematical space where distance reflects difference in meaning.
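To make the "map" idea concrete, here is a minimal sketch. The three-dimensional vectors are made up by hand purely for illustration (real embeddings are learned and far larger), and cosine similarity is just one common way to measure closeness, not necessarily the one any particular model uses.

```python
import math

# Toy, hand-picked 3-dimensional embeddings (hypothetical values).
# Real embeddings are learned from data and have hundreds or
# thousands of dimensions.
EMBEDDINGS = {
    "happy":   [0.90, 0.80, 0.10],
    "joyful":  [0.85, 0.75, 0.15],
    "car":     [0.10, 0.20, 0.90],
    "vehicle": [0.15, 0.25, 0.85],
}

def cosine_similarity(a, b):
    """Return a score near 1.0 when two vectors point the same way."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

happy_joyful = cosine_similarity(EMBEDDINGS["happy"], EMBEDDINGS["joyful"])
happy_car = cosine_similarity(EMBEDDINGS["happy"], EMBEDDINGS["car"])

print(f"happy vs joyful: {happy_joyful:.3f}")
print(f"happy vs car:    {happy_car:.3f}")
```

With these toy numbers, "happy" scores much closer to "joyful" than to "car," which is the whole point of the map.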

The AI figures out these relationships on its own. Nobody tells it that "king" minus "man" plus "woman" should equal "queen." It discovers these patterns just by analyzing millions of text examples. The mathematical positions (called vectors) naturally organize themselves in ways that capture these relationships.
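Here is a small sketch of that "king" minus "man" plus "woman" arithmetic. The two-dimensional vectors are invented for the example (one axis loosely standing for royalty, the other for gender), so treat it as a picture of the idea rather than what a trained model actually stores.

```python
import math

# Hypothetical 2-dimensional vectors: [royalty, maleness].
# These values are hand-picked for illustration, not learned.
VECTORS = {
    "king":  [0.9, 0.9],
    "queen": [0.9, 0.1],
    "man":   [0.1, 0.9],
    "woman": [0.1, 0.1],
    "apple": [0.0, 0.5],
}

def analogy(a, b, c):
    """Compute a - b + c and return the closest remaining word."""
    target = [x - y + z for x, y, z in zip(VECTORS[a], VECTORS[b], VECTORS[c])]
    # Skip the three input words, as is usual for this kind of lookup.
    candidates = [w for w in VECTORS if w not in (a, b, c)]
    return min(candidates, key=lambda w: math.dist(target, VECTORS[w]))

nearest = analogy("king", "man", "woman")
print(nearest)
```

In a real model nobody assigns those axes by hand. Similar directions simply show up in the learned numbers.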

A vector is similar to a GPS coordinate, but instead of just latitude and longitude, you might have 1,536 different dimensions. Each word or concept gets its own unique coordinate in this massive space. Words need all these dimensions because they're complicated. "Bank" could mean a financial institution or the side of a river. "Light" could be about weight, brightness, or even casual attitude. Each dimension helps capture a different aspect of meaning, context, and usage.

When you type a question into an AI tool, it immediately converts your words into these vectors. Then it searches through its vast space of knowledge to find the most relevant vectors: the concepts and patterns that best match what you're asking. The whole conversation happens in this mathematical space before getting translated back into the words you see on your screen.
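One simplified way to picture that lookup is below. Everything here is an assumption made for the sketch: the tiny word vectors, the two "topics," and the trick of averaging word vectors to represent a whole question. Real language models do something far more involved, but the "turn text into a vector, then find what's nearby" flow is the same basic shape.

```python
import math

# Hypothetical 2-dimensional word vectors: [animal-ness, vehicle-ness].
WORD_VECTORS = {
    "cat":    [0.9, 0.1],
    "dog":    [0.8, 0.2],
    "truck":  [0.1, 0.9],
    "engine": [0.2, 0.8],
}

# Hypothetical "concepts" the question can be matched against.
TOPICS = {
    "pets": [1.0, 0.0],
    "cars": [0.0, 1.0],
}

def embed(text):
    """Average the vectors of the words we recognize in the text."""
    vectors = [WORD_VECTORS[w] for w in text.lower().split() if w in WORD_VECTORS]
    if not vectors:
        raise ValueError("no known words in text")
    dims = len(vectors[0])
    return [sum(v[i] for v in vectors) / len(vectors) for i in range(dims)]

def best_match(text):
    """Return the topic whose vector sits closest to the text's vector."""
    query = embed(text)
    return min(TOPICS, key=lambda name: math.dist(query, TOPICS[name]))

print(best_match("my cat and dog"))
print(best_match("truck engine"))
```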

So how does the AI learn to create these meaningful vectors in the first place? Imagine teaching a kid to recognize animals by showing them thousands of pictures. "This is a cat. This is also a cat. This one? Still a cat." Eventually, they figure out what makes a cat a cat. AI training is similar, but at an insane scale.

Language models (ChatGPT, Claude, Grok, Llama, Mistral, etc.) are fed billions of sentences. The AI tries to predict what word comes next, checks if it was right, and adjusts its internal numbers (the vectors) to get better next time. Wrong guess? Adjust the vectors a tiny bit. Right guess? Those vectors were pretty good; keep them similar. Do this billions of times, and patterns emerge. The AI starts to "know" that "The cat sat on the..." probably ends with something like "mat" or "couch," not "purple" or "democracy."
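The guess, check, adjust loop can be sketched in a few lines. This is a deliberately tiny stand-in, not how any production model is built: it only looks at the single previous word, it keeps a plain table of scores instead of embeddings, and the three-sentence corpus, learning rate, and function names are all made up for the example.

```python
import math

corpus = [
    "the cat sat on the mat",
    "the dog sat on the couch",
    "the cat sat on the couch",
]
vocab = sorted({word for sentence in corpus for word in sentence.split()})

# scores[prev][nxt]: how strongly the model expects `nxt` after `prev`.
# Everything starts at zero, so the first guesses are pure chance.
scores = {prev: {nxt: 0.0 for nxt in vocab} for prev in vocab}

def next_word_probabilities(prev):
    """Turn the raw scores for `prev` into probabilities (softmax)."""
    exps = {w: math.exp(s) for w, s in scores[prev].items()}
    total = sum(exps.values())
    return {w: e / total for w, e in exps.items()}

def train(epochs=50, learning_rate=0.5):
    for _ in range(epochs):
        for sentence in corpus:
            words = sentence.split()
            for prev, actual in zip(words, words[1:]):
                probs = next_word_probabilities(prev)  # guess
                for w in vocab:
                    target = 1.0 if w == actual else 0.0  # check
                    # Adjust: nudge the right word up, the others down.
                    scores[prev][w] += learning_rate * (target - probs[w])

def predict(prev):
    probs = next_word_probabilities(prev)
    return max(probs, key=probs.get)

train()
print(predict("sat"))
```

After training, the model has picked up that "sat" is followed by "on" in this corpus, even though nobody told it so directly.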

This process, called backpropagation (basically learning from mistakes), adjusts millions or billions of parameters, which are the internal settings that determine how the AI interprets and generates text. When you see a model described as "70B" or "405B," that B stands for billion, and it's referring to these parameters. So GPT-4 with its rumored 1.76 trillion parameters has 1,760 billion little knobs and dials that got adjusted during training. Each training cycle makes the vectors a little more accurate, the patterns a little clearer.
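If the size labels feel abstract, the arithmetic is easy to check. The helper below is a hypothetical convenience function written for this post, not part of any library, and the 1.76 trillion figure is the same rumored number mentioned above.

```python
# Hypothetical helper: the suffix table and function name are
# assumptions made for this example.
SUFFIXES = {"M": 10**6, "B": 10**9, "T": 10**12}

def parameter_count(label):
    """Turn a size label such as '70B' into a plain integer."""
    number, suffix = label[:-1], label[-1].upper()
    return round(float(number) * SUFFIXES[suffix])

for label in ["70B", "405B", "1.76T"]:
    count = parameter_count(label)
    print(f"{label}: {count:,} parameters = {count // 10**9:,} billion")
```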

Understanding embeddings and vectors helps explain both AI's power and its limitations. When AI seems almost magical at understanding context and nuance, it's because those high-dimensional vectors captured incredibly subtle patterns from the training data. But when AI hallucinates or makes bizarre mistakes? Often it's because it's following mathematical patterns that seemed right in vector space but don't actually make sense in reality. The AI doesn't truly "know" that Abraham Lincoln didn't have a Twitter account. It just knows that certain word patterns usually appear together based on its training.

Training AI is essentially about converting human knowledge into mathematical patterns, storing those patterns as vectors in high-dimensional space, and then using those patterns to generate responses. It's pattern matching at a scale and complexity that's hard for our brains to fully grasp.

The next time you interact with an AI tool, remember that you're not talking to something that "knows" things. You're interacting with an incredibly sophisticated pattern-matching system that's turned language into math and uses that math to predict what should come next.